One endpoint. Every model. Zero downtime.

Orbyt is a self-hosted LLM gateway. Route, retry, and observe every request to every major AI provider through a single OpenAI-compatible endpoint.

Built for production

Three pillars: interoperability, reliability, transparency.

Every part of the gateway exists to remove friction between your application and the model. Nothing more.

When one provider blinks, the next takes over.

Define a primary model and a fallback chain. The decision engine cascades to the next model on rate limits, 5xx errors, and timeouts, so a single provider failure stays hidden from your client.

- Configurable retry policy with exponential backoff

- Per-request fallback_models override

- Strategies: cheap, fast, reliable, or provider-locked
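
The cascade described above can be sketched in a few lines. This is a minimal illustration, not Orbyt's actual decision engine: the type names, the `completeWithFallback` helper, the backoff constants, and the "retry the same model, then move down the chain" ordering are all assumptions made for the example.

```typescript
// Hypothetical shapes for illustration; Orbyt's internal types are not shown in the source.
type Failure = { kind: "http"; status: number } | { kind: "timeout" };
type CallResult = { kind: "ok"; text: string } | { kind: "failed"; failure: Failure };

// Cascade on rate limits (429), 5xx errors, and timeouts, as listed above.
function isRetryable(f: Failure): boolean {
  if (f.kind === "timeout") return true;
  return f.status === 429 || (f.status >= 500 && f.status <= 599);
}

// Exponential backoff: baseMs * 2^attempt, capped at maxMs (attempt starts at 0).
// The 200 ms base and 5 s cap are assumed values.
function backoffDelayMs(attempt: number, baseMs: number = 200, maxMs: number = 5000): number {
  return Math.min(maxMs, baseMs * Math.pow(2, attempt));
}

// Try each model in order (primary first), retrying with backoff before falling back.
async function completeWithFallback(
  models: string[],
  retriesPerModel: number,
  call: (model: string) => Promise<CallResult>,
  sleep: (ms: number) => Promise<void> = (ms) =>
    new Promise<void>((resolve) => setTimeout(resolve, ms)),
): Promise<{ model: string; text: string }> {
  for (const model of models) {
    for (let attempt = 0; attempt <= retriesPerModel; attempt++) {
      const result = await call(model);
      if (result.kind === "ok") return { model, text: result.text };
      // Assumption: errors outside the retryable set surface immediately.
      if (!isRetryable(result.failure)) {
        throw new Error("non-retryable failure on " + model);
      }
      if (attempt < retriesPerModel) await sleep(backoffDelayMs(attempt));
    }
  }
  throw new Error("all models in the chain failed");
}
```

Injecting `call` and `sleep` keeps the sketch testable without a network or real timers.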

Resilience built into the network layer.

A Redis-leased key pool, a global rate limiter, and a decision engine triage every failure mode before it reaches your application. SSE streaming is normalized across providers.

- Health-ranked API keys leased per request

- Unified streaming format across every provider

- Hard errors triaged: provider-exhausted vs. model-exhausted

Your keys. Your data. Your infra.

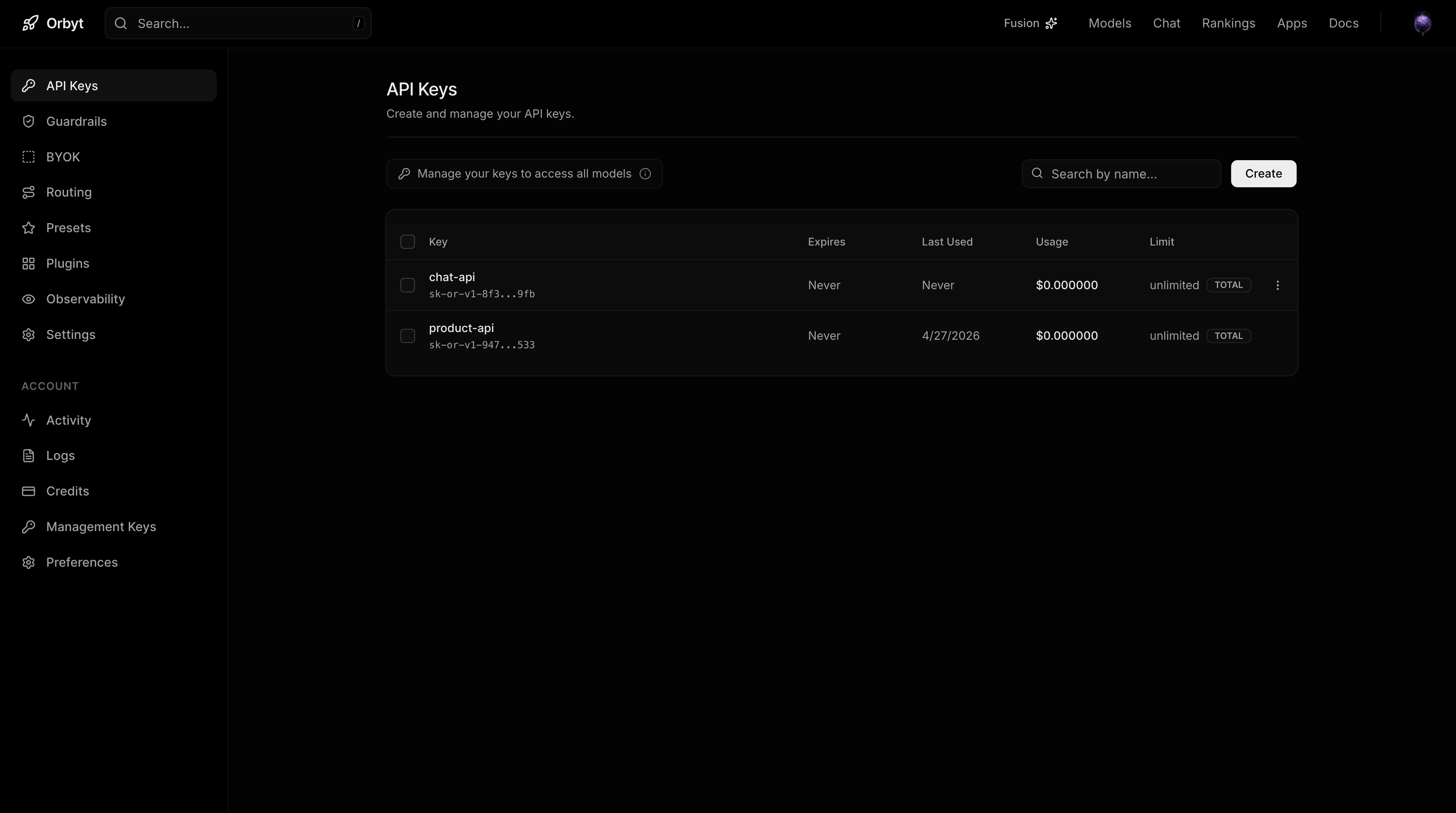

Orbyt runs on your infrastructure. Bearer tokens are scoped per project, telemetry persists to your Postgres, and you control rotation, revocation, and audit logs end to end.

- Scoped Bearer tokens with instant revocation

- Telemetry persisted to your own Postgres

- OpenAI-compatible — switch with two lines of config
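
A project-scoped token check with revocation might look roughly like the sketch below. The `TokenRecord` shape, the `authorize` helper, and the in-memory `Map` are hypothetical stand-ins; the source does not describe how Orbyt stores or validates tokens, and a real deployment would normally store token hashes rather than raw values.

```typescript
// Hypothetical token record; field names are assumptions for illustration.
interface TokenRecord {
  projectId: string;
  revoked: boolean;
}

type AuthResult = "ok" | "unauthenticated" | "revoked" | "wrong_project";

// Extract the token from an "Authorization: Bearer <token>" header value.
function parseBearer(header: string | undefined): string | null {
  if (!header) return null;
  const match = /^Bearer\s+(\S+)$/i.exec(header.trim());
  return match && match[1] ? match[1] : null;
}

// Check that the token exists, is not revoked, and belongs to the requested project.
function authorize(
  header: string | undefined,
  projectId: string,
  store: Map<string, TokenRecord>,
): AuthResult {
  const token = parseBearer(header);
  if (token === null) return "unauthenticated";
  const record = store.get(token);
  if (!record) return "unauthenticated";
  // Revocation takes effect on the next request because the flag is read every time.
  if (record.revoked) return "revoked";
  if (record.projectId !== projectId) return "wrong_project";
  return "ok";
}
```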

On the roadmap

Shipping next — track progress in the changelog.

Models

Route to every major model.

Define your primary, configure fallbacks, and let the engine handle the rest. New providers ship behind the same endpoint.

Two lines to switch from OpenAI.

Point your existing OpenAI SDK at the Orbyt gateway. Add an `extra` block to the request to declare fallbacks, routing strategy, and retry policy per request.

```js
import OpenAI from "openai";

const openai = new OpenAI({
  baseURL: "https://openrouter-clone-api-gateway.onrender.com/v1",
  apiKey: "gateway-sk-12345",
});

const response = await openai.chat.completions.create({
  model: "google/gemini-3.1-pro",
  messages: [
    { role: "system", content: "You are a helpful assistant." },
    { role: "user", content: "What is the capital of Germany?" },
  ],
  temperature: 0.7,
  // Orbyt extensions
  extra: {
    fallback_models: ["anthropic/claude-3-haiku", "google/gemini-2.5-flash"],
    provider: "cheap",
    retry: 3,
  },
});
```

Pipeline

The lifecycle of every request.

Deterministic flow. Every layer isolates faults at its origin so transient errors never propagate to the client.

Rate limit

A global limiter enforces traffic bounds before a request enters the routing pipeline.
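
The source does not say which algorithm the limiter uses. One common choice is a token bucket, sketched here in memory; the `TokenBucket` class and its parameters are illustrative assumptions, and a global limiter would keep this state in a shared store rather than in one process.

```typescript
// Token bucket: holds up to `capacity` tokens and refills at `refillPerSec`.
// Each admitted request spends one token; an empty bucket rejects the request.
class TokenBucket {
  private readonly capacity: number;
  private readonly refillPerSec: number;
  private tokens: number;
  private lastRefill: number; // epoch milliseconds

  constructor(capacity: number, refillPerSec: number, now: number = Date.now()) {
    this.capacity = capacity;
    this.refillPerSec = refillPerSec;
    this.tokens = capacity; // start full
    this.lastRefill = now;
  }

  // Returns true if the request may enter the routing pipeline.
  tryAcquire(now: number = Date.now()): boolean {
    if (now > this.lastRefill) {
      const elapsedSec = (now - this.lastRefill) / 1000;
      this.tokens = Math.min(this.capacity, this.tokens + elapsedSec * this.refillPerSec);
      this.lastRefill = now;
    }
    if (this.tokens < 1) return false;
    this.tokens -= 1;
    return true;
  }
}
```

Passing `now` explicitly makes the behavior deterministic in tests; production callers would rely on the `Date.now()` default.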

Select provider

The provider selector evaluates strategy (cheap, fast, reliable) and your fallback chain.
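
As a rough sketch of strategy-based selection: each candidate carries a few metrics, and the strategy decides which metric orders the list. The `Candidate` fields and the meaning given to each strategy are assumptions inferred from the strategy names; how Orbyt combines this ranking with the fallback chain is not described in the source.

```typescript
type Strategy = "cheap" | "fast" | "reliable";

// Hypothetical per-model metrics the selector might track.
interface Candidate {
  model: string;
  costPer1kTokens: number; // lower is better for "cheap"
  p50LatencyMs: number;    // lower is better for "fast"
  successRate: number;     // higher is better for "reliable"
}

// Return a new array ordered best-first for the given strategy.
function rankCandidates(candidates: Candidate[], strategy: Strategy): Candidate[] {
  const sorted = candidates.slice(); // copy so the caller's array is not mutated
  switch (strategy) {
    case "cheap":
      sorted.sort((a, b) => a.costPer1kTokens - b.costPer1kTokens);
      break;
    case "fast":
      sorted.sort((a, b) => a.p50LatencyMs - b.p50LatencyMs);
      break;
    case "reliable":
      sorted.sort((a, b) => b.successRate - a.successRate);
      break;
  }
  return sorted;
}
```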

Lease + execute

A health-ranked API key is leased from the Redis pool and the request hits the provider.
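
To make the leasing idea concrete, here is an in-memory stand-in for the Redis pool. Everything in it is an assumption for illustration: the `PooledKey` fields, exclusive leases with a fixed duration, and the moving-average health score. A Redis-backed version would need atomic lease acquisition across gateway instances.

```typescript
// Hypothetical pool entry; Orbyt's real schema is not shown in the source.
interface PooledKey {
  id: string;
  health: number;      // assumed score in [0, 1]; higher is healthier
  leasedUntil: number; // epoch milliseconds; 0 means free
}

class KeyPool {
  private readonly keys: PooledKey[];
  private readonly leaseMs: number;

  constructor(keys: PooledKey[], leaseMs: number = 30000) {
    this.keys = keys;
    this.leaseMs = leaseMs;
  }

  // Lease the healthiest key that is not currently leased, or null if none is free.
  lease(now: number = Date.now()): PooledKey | null {
    const free = this.keys
      .filter((k) => k.leasedUntil <= now)
      .sort((a, b) => b.health - a.health);
    const best = free[0];
    if (!best) return null;
    best.leasedUntil = now + this.leaseMs;
    return best;
  }

  // Free the key and fold the request outcome into its health score.
  release(id: string, succeeded: boolean): void {
    const key = this.keys.find((k) => k.id === id);
    if (!key) return;
    key.leasedUntil = 0;
    // Exponential moving average, so recent outcomes weigh more.
    key.health = 0.8 * key.health + 0.2 * (succeeded ? 1 : 0);
  }
}
```

Leases expire on their own after `leaseMs`, so a crashed request does not hold a key forever.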

Normalize & stream

Provider chunks are normalized to a single SSE format. Telemetry persists asynchronously.
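
Normalization can be pictured as mapping each provider's chunk shape onto one event format. The sketch below assumes the target is an OpenAI-style `chat.completion.chunk` event, which fits an OpenAI-compatible endpoint but is not confirmed by the source. The two provider shapes are simplified and hypothetical, and the payload is trimmed to a few fields.

```typescript
// Simplified, hypothetical provider chunk shapes; real payloads carry more fields.
type ProviderChunk =
  | { provider: "a"; delta: { text: string } }
  | { provider: "b"; parts: { text: string }[] };

// Pull the text fragment out of whichever shape arrived.
function extractText(chunk: ProviderChunk): string {
  if (chunk.provider === "a") return chunk.delta.text;
  return chunk.parts.map((p) => p.text).join("");
}

// Serialize one chunk as a server-sent event: a "data:" line followed by a blank line.
function toSseEvent(chunk: ProviderChunk, id: string, model: string): string {
  const payload = {
    id,
    object: "chat.completion.chunk",
    model,
    choices: [{ index: 0, delta: { content: extractText(chunk) }, finish_reason: null }],
  };
  return "data: " + JSON.stringify(payload) + "\n\n";
}

// OpenAI-style streams end with a literal [DONE] sentinel event.
const SSE_DONE = "data: [DONE]\n\n";
```

With this shape, a client written against the OpenAI streaming format can read the stream without knowing which provider produced it.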

Tracing

See every request, end to end.

DevTools shows you live status, payloads, latency, and the exact routing decision the engine made — for every request.

Open DevTools

Ship LLM features without shipping the chaos.

Self-host Orbyt in minutes. Point your existing OpenAI client at it. Sleep through the next provider outage.